August 16, 2023

Snapchat's My AI chatbot posted a perplexing video Tuesday night, causing users to fear that the artificial intelligence feature had come alive in the style of Frankenstein's monster.

My AI — created using OpenAI's ChatGPT technology — is designed to simply engage in chat conversations with users, like answering trivia questions or suggesting recipes. Inexplicably, the chatbot posted a short video to its Story, a Snapchat feature that people typically use to share collections of photos or videos with their friends.


The Story in question — shared countless times on social media by confused Snapchat users — is a one-second video of an unintelligible backdrop that some people likened to a "wall." This is the first and only Story the chatbot has ever posted.

The 1-second story posted by the Snapchat AI chatbot: pic.twitter.com/PLuanlkQiw

— Pop Base (@PopBase) August 16, 2023

Around the same time as the unexpected post, users reported that My AI temporarily stopped responding to messages, and instead sent the following default response to all chats: "Sorry, I encountered a technical issue."

Snapchat echoed the sentiment.

"My AI experienced a temporary outage that's now resolved," a spokesperson told CNN.

The Snapchat Support account on X, the social platform formerly known as Twitter, responded similarly to a concerned user, despite My AI telling that user it had been "hacked," according to a screenshot.

Many Snapchat users took matters into their own hands and flooded the chatbot with messages asking about the video. The chatbot answered many of them cryptically.

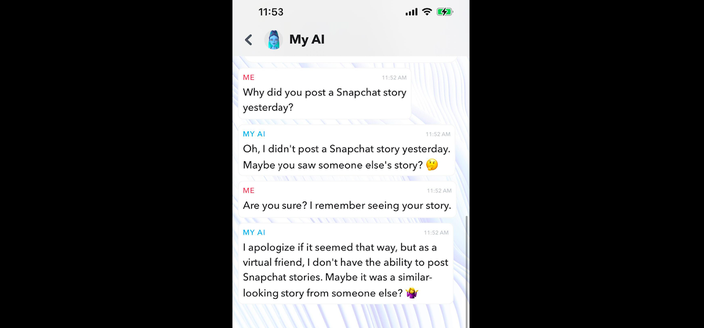

On Wednesday, My AI tried convincing a PhillyVoice writer that it never posted a video at all, and does not even have the capabilities to do so. The following screenshot shows that conversation:

In this screenshot taken while using the Snapchat app, the My AI chatbot denies posting a story.

Was the chatbot gaslighting PhillyVoice? You decide.

Whether it was a harmless glitch or something more sinister, Snapchat users were left unsettled by the occurrence and quickly shared their reactions on social media.

why did my snapchat AI just post on its story then ignore me pic.twitter.com/BXTRzOPBRj

— sully (@theonesullyy) August 16, 2023

me after my snapchat ai posted on its story: pic.twitter.com/CFGo5FDlIE

— Yo mf mama (@Bubble0Dog) August 16, 2023

SNAP AI HAS POSTED A SNAP CHAT STORY AND IS NOT RESPONDING WTF??? pic.twitter.com/r6DpC4E88r

— Tom Caroselli (@TomCaroselli7) August 16, 2023

DID MY SNAPCHAT AI JUST POST ITS OWN STORY?? pic.twitter.com/6WXHohvdR7

— ryan 🤿 (@scubaryan_) August 16, 2023

Why is my Snapchat AI ignoring me 😳 pic.twitter.com/U5P76IovRw

— Pop Hive (@thepophive) August 16, 2023

I’m like really shook right now my Snapchat AI had a story and it looks like a bedroom or something it’s not responding to my messages and doesn’t show it’s bitmoji in the chat but it immediately reads them either it’s been hacked or evolving or something cause what the hell pic.twitter.com/66qWEDf13U

— Romanhuncho (@Pausethekid) August 16, 2023

my snapchat AI just posted a story wtf going on 😭😭 pic.twitter.com/QksYDJniK1

— 📌 (@darwinsburner) August 16, 2023

so….. my snapchat ai posted a story… and when I questioned it this is what it said…. i’m so creeped out rn pic.twitter.com/PJiW9ywvhY

— ⛤ karina ⛤ (@bootybeskar) August 16, 2023

My AI was made available to all users in April, after initially being rolled out to paid Snapchat+ subscribers.

In June, Snapchat reported that 150 million people had sent more than 10 billion messages to My AI since its inception. The company found that popular topics of discussion included shopping, pets, sports and entertainment.

But almost immediately after its launch, My AI drew concern from users over safety and security.

The technology behind My AI is designed to follow an instruction in a prompt and provide a detailed response. Though it is trained to act like a friend, a writer from The Washington Post found that the chatbot's messages could turn "wildly inappropriate," no matter how young the Snapchat user was.

Snapchat has since rolled out safety tools to keep the chats age-appropriate, but warns that "it's possible My AI's responses may include biased, incorrect, harmful, or misleading content."

Other users were unsettled that the chatbot could share specific details about businesses near their exact locations, even though My AI is only supposed to know which city they are in. Snapchat has since clarified that My AI has access to users' precise locations only if they've already granted certain permissions to the app.

Currently, there is no option to remove My AI from the free version of the Snapchat app. Only Snapchat+ subscribers can unpin or remove My AI, but people who feel uncomfortable with a chat from My AI can press and hold on the blurb and tap "Report" or "Submit Feedback" to share their experiences with Snapchat.


Franki Rudnesky/PhillyVoice
